Garden and Vegetable Patch

zen.yandex.com

12 undemanding houseplants that even beginner growers can raise

In a container with no care: the best plants and some of my mistakes | Сад под Петербургом | Dzen

ok.ru

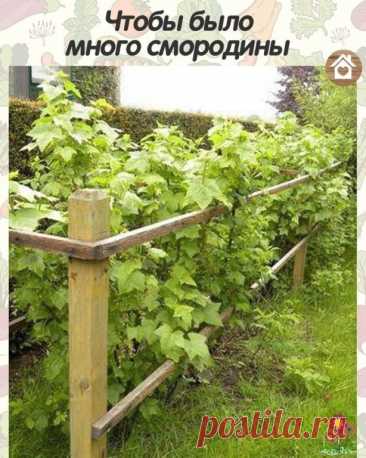

For a good currant harvest

Currants need 4 feedings per season.

1 - As soon as the buds have opened: 2 tbsp of ammonium nitrate per 10 L of water. Application rate: 1 bucket per bush.

2 - In mid-June: 1 tbsp of urea, 1.5 tbsp of superphosphate, and 0.5 tbsp of potassium sulfate per 10 L of water. Application rate: 1 bucket per bush.

3 - In late September to early October: 0.5 cup of superphosphate and 2/3 cup of potassium sulfate per bush.

4 - At the end of October: 0.5 bucket of well-rotted man...

Sowing annual flowers in April: how to sow so they bloom sooner | Волжский сад | Dzen

vmircvetov.ru

Houseplant: Yucca (Yucca). Yuccas are not palms: they belong to the agave family (Agavaceae), although their appearance somewhat resembles that of palms. Yuccas grow in arid regions of the USA and Mexico, so they are fairly hardy plants. Indoors, a yucca can reach 2 m in height. It almost never flowers in a room; old specimens sometimes flower in greenhouses. The inflorescence is a robust spike of large, white, bell-shaped flowers.

sdelaysam-svoimirukami.ru

How to easily remove a stump without uprooting it. This method is not labor-intensive and requires neither great strength nor specialized equipment. With it, you can remove even a dozen stumps from your plot single-handedly. There is really no secret to it: we simply burn the stump out from the inside. As a result...

vmircvetov.ru

Houseplant: Echeveria (Echeveria). The genus includes more than 200 species native to Mexico and Central and South America. The dense, fleshy leaves of echeverias are gathered into a compact rosette, whose diameter reaches 30-40 cm in E. metallica but only 6-10 cm in E. glauca. During flowering, long flower stalks rise above the rosettes, bearing raceme-like inflorescences.

ok.ru

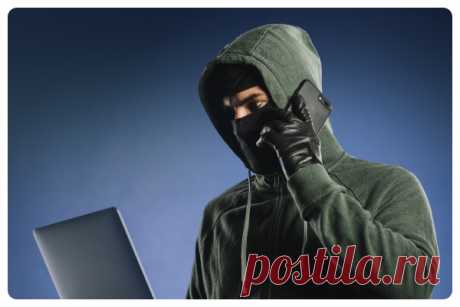

ALL THE SECRETS OF GROWING MELONS

So, as soon as the seedling has grown 4 leaves, pinch it out!

Plant the melon out and mulch it with grass right away.

A melon takes about 60 days to grow, but only if you prune it!

If you let it trail wherever it likes, you will get a huge amount of foliage and no fruit.

Pinch it above the fourth leaf. You will then see a great many fruits on the side shoots, but leave only about 6 per plant.

That is entirely suff...